WORK IN PROGRESS!!!!! SWAP FUNCTION FOR METHOD

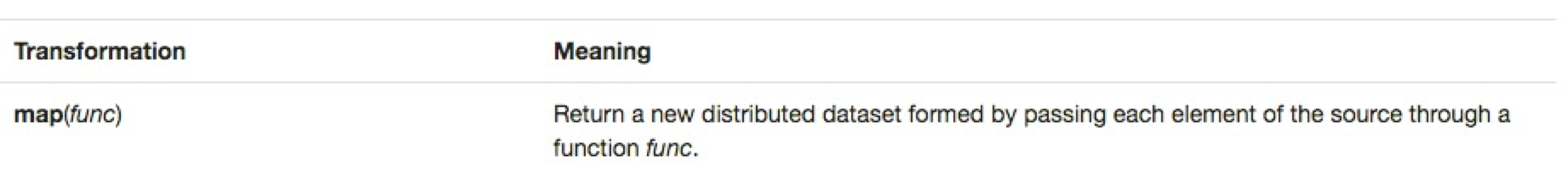

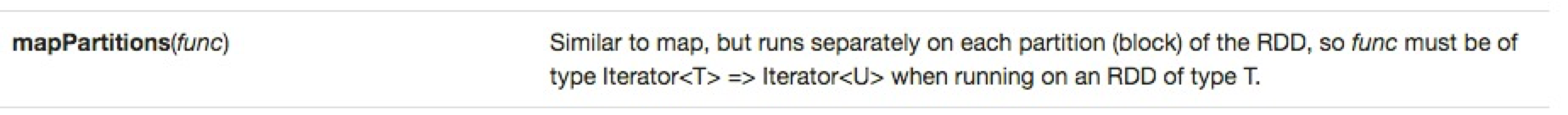

I'm writing this blog post for those learning Spark's RDD programming model who might have heard that the mapPartitions() transformation is usually faster than its map() brethren and wondered why. If in doubt... RTFM, which brought me to the following excerpt from http://spark.apache.org/docs/latest/programming-guide.html.

The good news is that the answer is right there. It just might not be as apparent for those from Missouri (you know... the "Show Me State") and almost all of us can benefit from a working example. If you're almost there from reading the description above then https://bzhangusc.wordpress.com/2014/06/19/optimize-map-performamce-with-mappartitions/ might just push you over the "aha" moment. It worked for me, but I wanted to build a fresh example all on my own which I'll try to document well enough for you to review and even recreate if you like.

If you made it this far then you know that Spark's Resilient Distributed Dataset (RDD) is a partitioned beast all its own, somewhat similar to how HDFS breaks files up into blocks. In fact, when we load a file in Spark from HDFS, by default the number of RDD partitions is the same as the number of HDFS blocks. You can suggest that you want the RDD partitioned differently (I'm breaking my example into three partitions), and that itself is a topic for another, even bigger, discussion. If you are still with me then you probably know that "narrow" transformations/tasks happen independently on each of the partitions. Therefore, the well-used map() function is working in parallel on each of the RDD's partitions that it is walking through.
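If you want to see the partitioning for yourself, here is a minimal sketch. It assumes you are in a spark-shell session where `sc` is already defined, and `demoRdd` is just a made-up name for a tiny throwaway RDD (it is not the story file used later in this post).

```scala
// Assumption: running inside spark-shell, so `sc` (the SparkContext) already exists.
// `demoRdd` is a hypothetical name used only for this illustration.
val demoRdd = sc.parallelize(1 to 9, 3)   // ask for three partitions

// How many partitions did we actually get?
println(demoRdd.partitions.length)        // 3

// glom() turns each partition into an array so we can look at the boundaries.
demoRdd.glom().collect().foreach(p => println(p.mkString(", ")))
// 1, 2, 3
// 4, 5, 6
// 7, 8, 9
```

Each of those three chunks is what a narrow transformation such as map() works on independently.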

That's good news – in fact, that's great news as these narrow tasks are key to performance! The sister mapPartitions() transformation also works independently on the partitions, so what's so special that makes it run better in most cases? Well... it comes down to the fact that map() exercises the function being utilized at a per element level while mapPartitions() exercises the function at the partition level. What does that mean in practice?
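To make the difference concrete, here is a small sketch of the two call shapes side by side. It assumes a spark-shell session (so `sc` exists); the `words` RDD and the value names are made up for the illustration. The thing to notice is the type of the function argument: map() takes a function of one element, while mapPartitions() takes a function from an Iterator over the whole partition to another Iterator.

```scala
// Assumption: running inside spark-shell, so `sc` already exists.
// `words` is a hypothetical three-element RDD used only for this illustration.
val words = sc.parallelize(Seq("I", "am", "Sam"), 3)

// map(): the function sees ONE element at a time (String => Int).
val lengthsViaMap = words.map((w: String) => w.length)

// mapPartitions(): the function sees an iterator over the WHOLE partition
// (Iterator[String] => Iterator[Int]) and returns an iterator of results.
val lengthsViaMapPartitions =
  words.mapPartitions((iter: Iterator[String]) => iter.map(_.length))

println(lengthsViaMap.collect().mkString(", "))            // 1, 2, 3
println(lengthsViaMapPartitions.collect().mkString(", "))  // 1, 2, 3
```

Both produce the same answer; only the granularity at which Spark hands data to your function changes.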

It means that if we have 100K elements in a particular RDD partition then we will fire off the function being used by the mapping transformation 100K times when we use map(). Conversely, if we use mapPartitions() then we will only call the particular function one time, but we will pass in all 100K records and get back all responses in one function call.
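The call counts can be checked without Spark at all. The following is a plain Scala model, not Spark code: a single in-memory range stands in for one RDD partition, and the names (`partition`, `processPartition`, the counters) are invented for the sketch.

```scala
// Plain Scala model of ONE partition holding 100K elements (no Spark needed).
val partition: Seq[Int] = 1 to 100000

// map()-style: the function body runs once per element.
var perElementCalls = 0
val viaMap = partition.iterator.map { n => perElementCalls += 1; n * 2 }.toList

// mapPartitions()-style: the function body runs once for the whole partition
// and is handed an iterator over every element in it.
var perPartitionCalls = 0
def processPartition(iter: Iterator[Int]): Iterator[Int] = {
  perPartitionCalls += 1
  iter.map(_ * 2)
}
val viaMapPartitions = processPartition(partition.iterator).toList

println(s"map-style calls: $perElementCalls")              // 100000
println(s"mapPartitions-style calls: $perPartitionCalls")  // 1
println(viaMap == viaMapPartitions)                        // true
```

Note that the per-element work (the doubling) still happens 100K times either way; what drops to one is the number of times the outer function is entered.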

That means we could get a big lift from not exercising the particular function so many times, especially if the function does something expensive on every call that it would only need to do once if we passed in all the elements together.
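Here is a sketch of what "something expensive" might look like. It assumes a spark-shell session (so `sc` exists). `ExpensiveNormalizer` is a hypothetical stand-in I made up for anything costly to construct, such as a database connection or a parser, and the sleep merely simulates that cost.

```scala
// Assumption: running inside spark-shell, so `sc` already exists.
// Hypothetical stand-in for something costly to build (connection, parser, ...).
class ExpensiveNormalizer extends Serializable {
  Thread.sleep(1)  // simulate the setup cost paid on every construction
  def normalize(word: String): String = word.toLowerCase.replaceAll("[^a-z]", "")
}

val sample = sc.parallelize(Seq("I", "am", "Sam.", "Sam", "I", "am!"), 3)

// map(): the setup cost is paid once per ELEMENT.
val slow = sample.map(w => new ExpensiveNormalizer().normalize(w))

// mapPartitions(): the setup cost is paid once per PARTITION,
// then the same object is reused for every element in that partition.
val fast = sample.mapPartitions { iter =>
  val normalizer = new ExpensiveNormalizer()
  iter.map(w => normalizer.normalize(w))
}

println(slow.collect().mkString(", "))
println(fast.collect().mkString(", "))
```

With six words the difference is invisible, but with 100K elements per partition the first version would construct 100K objects where the second constructs one.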

Hmmm... if that doesn't make immediate sense, let's do some housekeeping for our example and then circle-back to this thought.

This next little bit simply loads up a timeless Dr. Seuss story into an RDD that then gets split into another RDD with a single word for each element. I'll explain more in a bit, but let's also cache this RDD into memory to aid our ultimate test later in the blog.

For my testing, I used version 2.4 of the Hortonworks Sandbox. Once you get this started up, SSH into the machine as root, then switch to the mktg1 user, who has a local Linux account as well as an HDFS home directory. If you want to use a brand-new user, try my simple hadoop cluster user provisioning process (simple = w/o pam or kerberos). Once there, copy the contents of XXXX onto your clipboard and paste it into the vi editor (type "i" once vi opens up, paste from your clipboard, hit ESC twice, then type ":" to get the command prompt and "wq" to write & quit the editor), and then copy that file into HDFS.

...

| Code Block | ||||

|---|---|---|---|---|

| ||||

scala> val wordsRdd = sc.textFile("10xselfishgiantGreenEggsAndHam.txt", 3).flatMap(line => line.split(" "))

wordsRdd: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[342] at flatMap at <console>:27

scala> wordsRdd.persist() //mark it as cached

res0: wordsRdd.type = MapPartitionsRDD[342] at flatMap at <console>:27

scala> sc.setLogLevel("INFO") //enable timing messages

scala> wordsRdd.take(3) //trigger cache load

16/05/19 23:08:22 INFO DAGScheduler: Job 0 finished: take at <console>:30, took 0.565019 s

res2: Array[String] = Array(I, am, Daniel) |

Just to verify that the cache is helping performance (it took 0.565 secs above), let's hit it a few more times to see a much faster and more consistent response.

| Code Block | ||||

|---|---|---|---|---|

| ||||

scala> wordsRdd.take(3)

16/05/19 23:11:12 INFO DAGScheduler: Job 1 finished: take at <console>:30, took 0.044692 s

res3: Array[String] = Array(I, am, Daniel)

scala> wordsRdd.take(3)

16/05/19 23:11:14 INFO DAGScheduler: Job 2 finished: take at <console>:30, took 0.020183 s

res4: Array[String] = Array(I, am, Daniel)

scala> wordsRdd.take(3)

16/05/19 23:11:17 INFO DAGScheduler: Job 3 finished: take at <console>:30, took 0.033659 s

res5: Array[String] = Array(I, am, Daniel) |
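If you would rather confirm the caching directly than infer it from timings, a sketch like the following should work in the same spark-shell session. It assumes `wordsRdd` from the block above is still defined. One caveat, as far as I understand it: take(3) only has to read the first partition, so only that partition is likely to be in the cache until an action such as count() touches all three.

```scala
// Assumption: same spark-shell session as above, with `wordsRdd` already defined.
import org.apache.spark.storage.StorageLevel

// persist() with no arguments marks the RDD for in-memory storage.
println(wordsRdd.getStorageLevel == StorageLevel.MEMORY_ONLY)  // true

// Force every partition to be computed (and therefore cached),
// rather than just the first one that take(3) reads.
val totalWords = wordsRdd.count()
println(s"words cached across ${wordsRdd.partitions.length} partitions: $totalWords")
```

The Storage tab of the Spark UI should then show how many of the partitions are actually held in memory.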

Now that we have some of the housekeeping out of the way, what are we going to test, and what does it all mean?


...