manually installing hue (on my virtualized 5-node cluster)

I needed to manually install Hue on the little cluster I previously documented in Build a Virtualized 5-Node Hadoop 2.0 Cluster, so I thought I'd document it as I went, just in case it worked (and in case there were any tweaks from the documentation). The Hortonworks Doc site URL for the instructions I used is http://docs.hortonworks.com/HDPDocuments/HDP2/HDP-2.0.9.0/bk_installing_manually_book/content/rpm-chap-hue.html.

One of the first things you get asked to do is make sure Python 2.6 is installed. I ran into the issue below, which suggested I couldn't even get this rolling.

    [root@m1 ~]# yum install python26
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirror.dattobackup.com
     * extras: centos.someimage.com
     * updates: mirror.beyondhosting.net
    Setting up Install Process
    No package python26 available.
    Error: Nothing to do

I was pretty sure Ambari had already laid this down, so I did a quick double-check of the installed version on all 5 of my nodes to verify I was in good shape.

    [root@m1 ~]# which python
    /usr/bin/python
    [root@m1 ~]# python -V
    Python 2.6.6
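If you'd rather not log in to every box by hand, the same check can be scripted. This is only a rough sketch, assuming you have passwordless ssh as root to each node; the `is_python26` and `check_nodes` helper names are mine, not anything from the Hortonworks docs.

```shell
#!/bin/sh
# Succeeds if the given "python -V" output reports a 2.6.x interpreter.
is_python26() {
    case "$1" in
        "Python 2.6."*) return 0 ;;
        *) return 1 ;;
    esac
}

# Runs "python -V" on each host passed as an argument and reports the result.
# ASSUMPTION: passwordless ssh as root to every host you pass in.
check_nodes() {
    for host in "$@"; do
        # Python 2.x prints its version on stderr, hence the 2>&1.
        version=$(ssh "root@$host" 'python -V' 2>&1)
        if is_python26 "$version"; then
            echo "$host: OK ($version)"
        else
            echo "$host: unexpected ($version)"
        fi
    done
}
```

You would call it with your own host list, something like `check_nodes m1.hdp2 m2.hdp2` plus whatever your workers are named.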

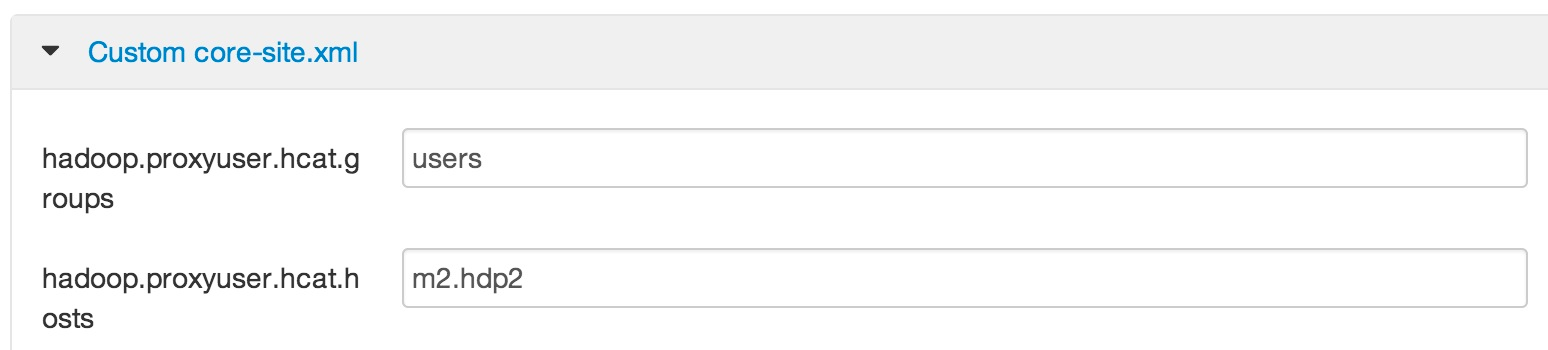

When you get to the Configure HDP page you'll be reminded that if you are using Ambari (like me) you should NOT edit the conf files directly. I used vi to check the existing files in /etc/hadoop/conf to see what needed to be done. The single property for hdfs-site.xml was already in place as described. For core-site.xml, the properties starting with hadoop.proxyuser.hcat were already present as shown below.

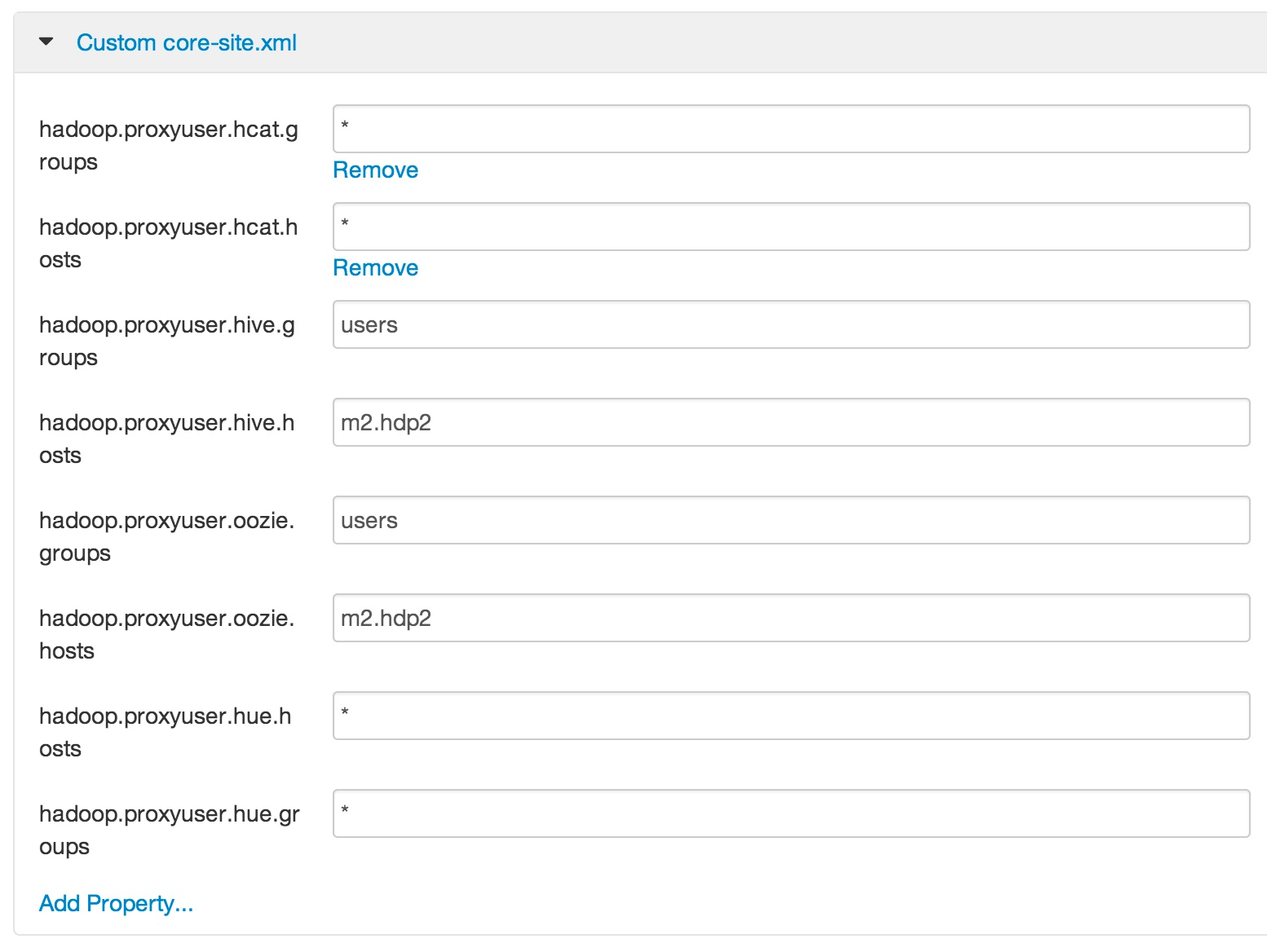

The next screenshot shows I changed them as described in the documentation. The properties starting with hadoop.proxyuser.hue were not present (no surprise!) so I added them as described (and shown below).
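Once Ambari has pushed the change out, a quick way to confirm the new properties actually landed is to grep the generated file. Here is a small sketch of that, assuming the conf lives at /etc/hadoop/conf/core-site.xml as it does on my cluster; the function names are just ones I made up for illustration.

```shell
#!/bin/sh
# Succeeds if the XML conf file ($1) contains a <name> element for property $2.
# -F treats the property name as a fixed string so the dots are not wildcards.
has_property() {
    grep -qF "<name>$2</name>" "$1"
}

# Reports whether both Hue proxyuser properties are in the given conf file.
# ASSUMPTION: defaults to /etc/hadoop/conf/core-site.xml if no file is passed.
check_hue_proxyuser() {
    conf=${1:-/etc/hadoop/conf/core-site.xml}
    for prop in hadoop.proxyuser.hue.hosts hadoop.proxyuser.hue.groups; do
        if has_property "$conf" "$prop"; then
            echo "$prop: present"
        else
            echo "$prop: MISSING"
        fi
    done
}
```

Running `check_hue_proxyuser` on each node should report both properties as present before you move on.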

I then used Ambari to add the ...hue.hosts and ...hue.groups custom properties for the webhcat-site.xml and oozie-site.xml conf files. That took us to the Install Hue instructions, which I decided to run on my first master node and which completed without problems. When you get to Configure Web Server, steps 1-3 don't really require any action (remember, we're building a sandbox within a machine, not a production-ready cluster). Step 4 was a tiny bit confusing, so I'm dumping my screen in case it helps.

    [root@m1 conf]# cd /usr/lib/hue/build/env/bin
    [root@m1 bin]# ./easy_install pyOpenSSL
    Searching for pyOpenSSL
    Best match: pyOpenSSL 0.13
    Processing pyOpenSSL-0.13-py2.6-linux-x86_64.egg
    pyOpenSSL 0.13 is already the active version in easy-install.pth
    Using /usr/lib/hue/build/env/lib/python2.6/site-packages/pyOpenSSL-0.13-py2.6-linux-x86_64.egg
    Processing dependencies for pyOpenSSL
    Finished processing dependencies for pyOpenSSL
    [root@m1 bin]# vi /etc/hue/conf
    [root@m1 bin]# vi /etc/hue/conf/hue.ini
      ... MAKE THE CHANGES IN STEP 4-B ((I ALSO MADE A COPY OF THE .INI FILE FOR COMPARISON)) ...
    [root@m1 bin]# diff /etc/hue/conf/hue.ini.orig /etc/hue/conf/hue.ini
    70c70
    < ## ssl_certificate=
    ---
    > ## ssl_certificate=$PATH_To_CERTIFICATE
    73c73
    < ## ssl_private_key=
    ---
    > ## ssl_private_key=$PATH_To_KEY
    [root@m1 bin]# openssl genrsa 1024 > host.key
    Generating RSA private key, 1024 bit long modulus
    .........................................................................++++++
    ...................................................................++++++
    e is 65537 (0x10001)
    [root@m1 bin]# openssl req -new -x509 -nodes -sha1 -key host.key > host.cert
    You are about to be asked to enter information that will be incorporated
    into your certificate request.
    What you are about to enter is what is called a Distinguished Name or a DN.
    There are quite a few fields but you can leave some blank
    For some fields there will be a default value,
    If you enter '.', the field will be left blank.
    -----
    Country Name (2 letter code) [XX]:US
    State or Province Name (full name) []:Georgia
    Locality Name (eg, city) [Default City]:Alpharetta
    Organization Name (eg, company) [Default Company Ltd]:Hortonworks
    Organizational Unit Name (eg, section) []:
    Common Name (eg, your name or your server's hostname) []:m1.hdp2
    Email Address []:lmartin@hortonworks.com
    [root@m1 bin]#

For sections 4.2 through 4.6 it looks like there was at least one problem (namely hadoop_hdfs_home) so I've dumped my screen again. The following is aligned with the 5-node cluster I did previously.

    [root@m1 bin]# cd /etc/hue/conf
    [root@m1 conf]# vi hue.ini
      ... MAKE THE CHANGES IN STEP 4.2 - 4.6 ((I ALSO MADE A COPY OF THE .INI FILE FOR COMPARISON)) ...
    [root@m1 conf]# diff hue.ini.orig hue.ini
    70c70
    < ## ssl_certificate=
    ---
    > ## ssl_certificate=$PATH_To_CERTIFICATE
    73c73
    < ## ssl_private_key=
    ---
    > ## ssl_private_key=$PATH_To_KEY
    238c238
    < fs_defaultfs=hdfs://localhost:8020
    ---
    > fs_defaultfs=hdfs://m1.hdp2:8020
    243c243
    < webhdfs_url=http://localhost:50070/webhdfs/v1/
    ---
    > webhdfs_url=http://m1.hdp2:50070/webhdfs/v1/
    251c251
    < ## hadoop_hdfs_home=/usr/lib/hadoop/lib
    ---
    > ## hadoop_hdfs_home=/usr/lib/hadoop-hdfs
    298c298
    < resourcemanager_host=localhost
    ---
    > resourcemanager_host=m2.hdp2
    319c319
    < resourcemanager_api_url=http://localhost:8088
    ---
    > resourcemanager_api_url=http://m2.hdp2:8088
    322c322
    < proxy_api_url=http://localhost:8088
    ---
    > proxy_api_url=http://m2.hdp2:8088
    325c325
    < history_server_api_url=http://localhost:19888
    ---
    > history_server_api_url=http://m2.hdp2:19888
    328c328
    < node_manager_api_url=http://localhost:8042
    ---
    > node_manager_api_url=http://m2.hdp2:8042
    338c338
    < oozie_url=http://localhost:11000/oozie
    ---
    > oozie_url=http://m2.hdp2:11000/oozie
    377c377
    < ## beeswax_server_host=<FQDN of Beeswax Server>
    ---
    > ## beeswax_server_host=m2.hdp2
    529c529
    < templeton_url="http://localhost:50111/templeton/v1/"
    ---
    > templeton_url="http://m2.hdp2:50111/templeton/v1/"
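Since nearly every change above swaps localhost for a real host name, it is easy to miss one. The sketch below is one way to double-check, assuming your hue.ini marks comments with a leading `#` like mine does; `remaining_localhost` is a helper name I invented for this post.

```shell
#!/bin/sh
# Prints (with line numbers) every uncommented line in the given ini file
# that still mentions localhost. Prints nothing if they have all been replaced.
remaining_localhost() {
    # grep -n prefixes each hit with "NUM:"; the second grep drops hits whose
    # content starts with optional whitespace followed by '#'.
    grep -n 'localhost' "$1" | grep -v '^[0-9]*:[[:space:]]*#'
}
```

On my cluster that would be `remaining_localhost /etc/hue/conf/hue.ini`. Anything it prints is worth a second look, though not every hit is necessarily wrong for your layout.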

The Start Hue directions yielded the following output.

    [root@m1 conf]# /etc/init.d/hue start
    Detecting versions of components...
    HUE_VERSION=2.3.0-101
    HDP=2.0.6
    Hadoop=2.2.0
    HCatalog=0.12.0
    Pig=0.12.0
    Hive=0.12.0
    Oozie=4.0.0
    Ambari-server=1.4.3
    HBase=0.96.1
    Starting hue: [ OK ]

The instructions then go to Validate Configuration, but since we stopped everything with Ambari earlier, this is a great time to start up all the services before going to the Hue URL, which for me is http://192.168.56.41:8000.

For reasons that will take longer to explain than I want to go into during this posting, when replacing 'YourHostName' in http://YourHostName:8000 to pull up Hue, be sure to use a host name (or just the IP address) that every node in the cluster can use to reach the node Hue is running on. Buy me a Dr Pepper and I'll tell you all about it.

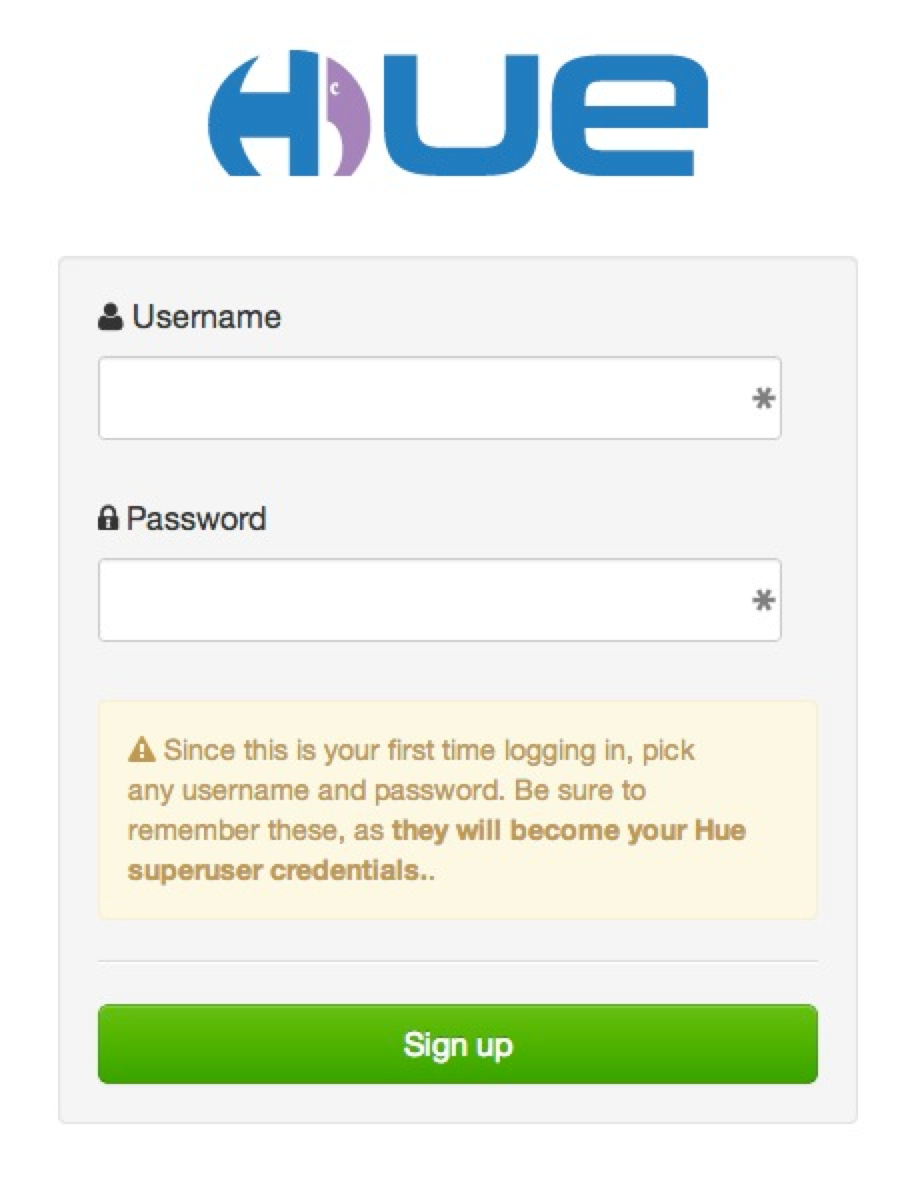

If you configured authentication like I did (or, really, just left the default configuration as it was), you will get this reminder when Hue comes up for the first time.

To keep my life easy, I just use hue and hue for the username and password. I also ran a dir listing on HDFS before I logged in and after as shown below. Notice that /user/hue was created after I logged in (group is hue as well).

    [root@m1 ~]# su hdfs
    [hdfs@m1 root]$ hadoop fs -ls /user
    Found 5 items
    drwxrwx---   - ambari-qa hdfs          0 2014-04-08 19:24 /user/ambari-qa
    drwxr-xr-x   - hcat      hdfs          0 2014-01-20 00:23 /user/hcat
    drwx------   - hdfs      hdfs          0 2014-03-20 23:00 /user/hdfs
    drwx------   - hive      hdfs          0 2014-01-20 00:23 /user/hive
    drwxrwxr-x   - oozie     hdfs          0 2014-01-20 00:25 /user/oozie
    [hdfs@m1 root]$
    [hdfs@m1 root]$ hadoop fs -ls /user
    Found 6 items
    drwxrwx---   - ambari-qa hdfs          0 2014-04-08 19:24 /user/ambari-qa
    drwxr-xr-x   - hcat      hdfs          0 2014-01-20 00:23 /user/hcat
    drwx------   - hdfs      hdfs          0 2014-03-20 23:00 /user/hdfs
    drwx------   - hive      hdfs          0 2014-01-20 00:23 /user/hive
    drwxr-xr-x   - hue       hue           0 2014-04-08 19:29 /user/hue
    drwxrwxr-x   - oozie     hdfs          0 2014-01-20 00:25 /user/oozie

My Hue UI came up fine without any misconfiguration detected, so I decided to run through some of my prior blog postings to check things out. I selected how do i load a fixed-width formatted file into hive? (with a little help from pig) since it exercises Pig and Hive pretty quickly.

For some reason, I could not get away with using the simple way to register the piggybank jar file shown in that quick tutorial. I had to actually load it into HDFS (I put it at /user/hue/jars/piggybank.jar) and then register it as shown below, which is explained in more detail in the comments section of create and share a pig udf (anyone can do it).

    REGISTER /user/hue/jars/piggybank.jar; --that is an HDFS path
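For completeness, getting the jar into HDFS looks roughly like the sketch below. The `upload_piggybank` function is just my own wrapper around the stock `hadoop fs` commands, and the local path to piggybank.jar is something you will need to supply for your own install; I'm deliberately not guessing at it here.

```shell
#!/bin/sh
# Copies a local piggybank jar into HDFS so Pig scripts run from Hue can REGISTER it.
#   $1 = local path to piggybank.jar (ASSUMPTION: you know where yours lives)
#   $2 = target HDFS directory, defaulting to the /user/hue/jars location used in this post
upload_piggybank() {
    local_jar=$1
    hdfs_dir=${2:-/user/hue/jars}
    # -mkdir -p creates the directory (and parents) if it is not already there.
    hadoop fs -mkdir -p "$hdfs_dir" &&
    hadoop fs -put "$local_jar" "$hdfs_dir/piggybank.jar"
}
```

Run it as a user who can write under /user/hue (the hue user itself is the obvious choice), otherwise the put will fail on permissions.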

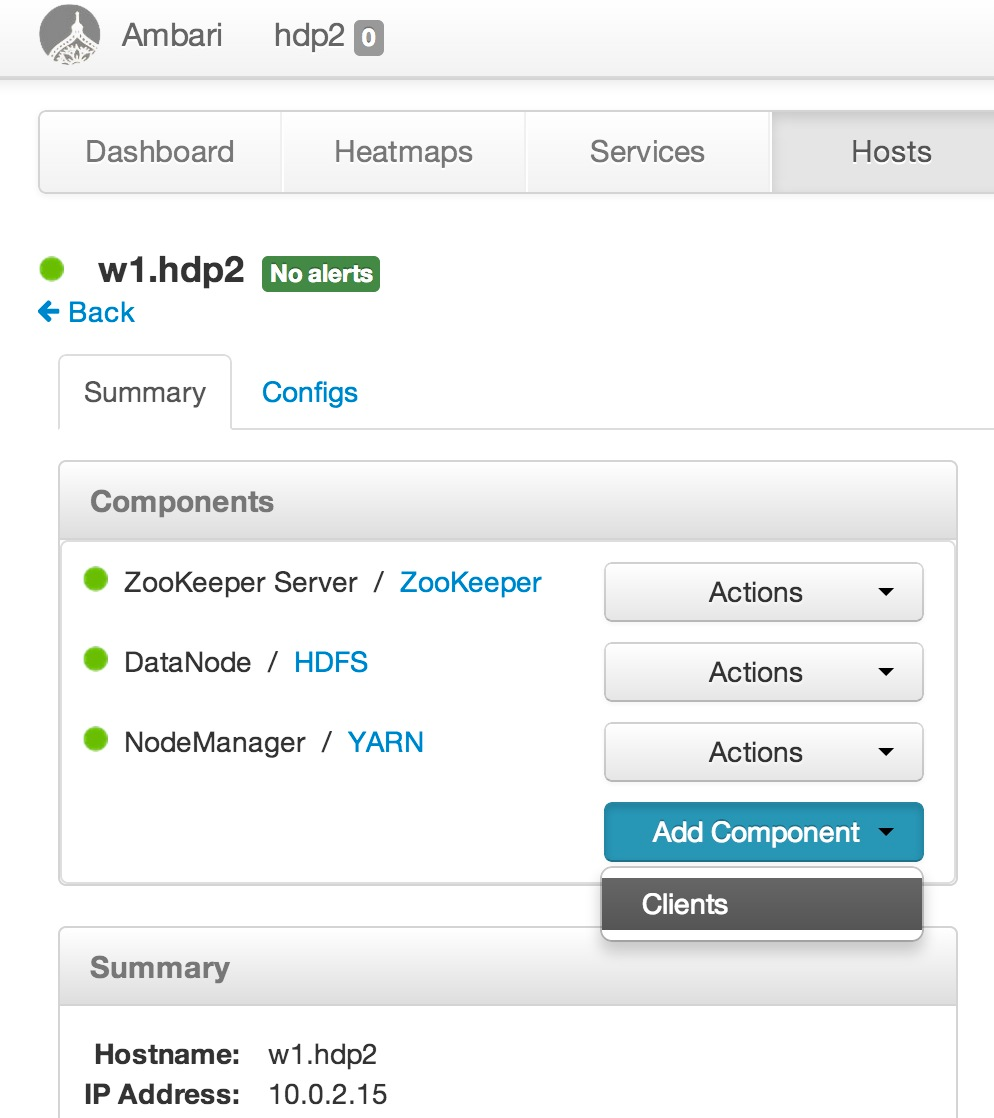

I got into trouble when I ran convert-emp and Hue's Pig interface complained with "Please initialize HIVE_HOME". You may not run into this problem yourself, as the fix (which I actually got help from Hortonworks Support on, as seen in Case_00004924.pdf) was simply to add the Hive Client to all nodes within the cluster (this will be fixed in HDP 2.1). As the ticket said, that would be painful if I had to do it for tons of nodes, especially with the version of Ambari I'm using, which does not yet allow you to do operations like this on many machines at a time. That said, I just needed to add it to three workers via the Ambari feature shown below.

Truthfully, on my little virtualized cluster this takes a few minutes for each host. It will be nice when stuff like this can happen in parallel. Hey... just another reason to add "Clients" to all nodes in the cluster!

All in all, a bit more arduous than it ought to be, but now you have Hue running in your very own virtualized cluster!!