There are plenty of folks who will tell you "Hadoop isn't secure!", but that's not the whole story. Yes, as Merv Adrian points out, Hadoop in its most bare-bones mode does have a number of concerns. Fortunately, as with the rest of Hadoop, layer upon layer of software comes together in concert to provide various levels of security shielding that address specific requirements.

Hadoop started out with little thought given to security. This is because there was a problem to be solved, and the "users" of the earliest incarnations of Hadoop all worked together – and all trusted each other. Fortunately for all of us, especially those of us who made career bets on this technology, Hadoop acceptance and adoption have been growing by leaps and bounds, which only makes security that much more important. Some of the earliest thoughts around Hadoop security were to simply "wall it off". Yep, wrap it up with network security and only let a few trusted folks in. Of course, then you needed to keep letting a few more folks in, and from that approach of network isolation came the ever-present edge node (aka gateway server, ingestion node, etc.) that almost every cluster employs today. But wait... I'm getting ahead of myself.

My goal for this posting is to cover how Hadoop addresses the AAA (Authentication, Authorization, and Auditing) spectrum that is typically used to describe system security.

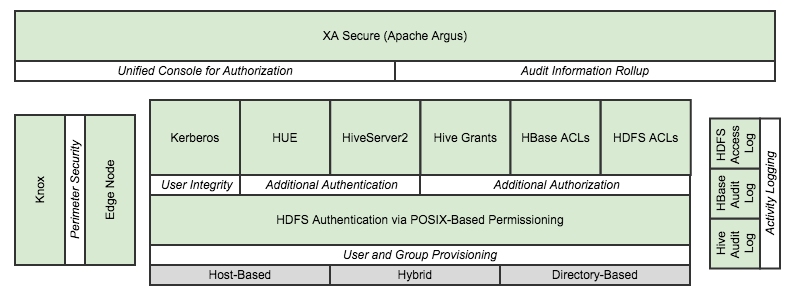

The diagram above represents my view of Hadoop security, at least as it stands in mid-2014, since there is rapid and continuous innovation in this domain. The sections of this blog post are organized around the italicized characteristics in the white boxes.

While the diagram presents layers, each of these components can be used independently of the others. The only exception is the base HDFS authentication, which is always present and is foundational to the other components. The overall security posture is enhanced by employing more (appropriate) components, but a component that does not map directly to a security requirement can add complexity to the overall system without a corresponding benefit.

For the latest on Hadoop security, please visit http://hortonworks.com/labs/security/.

User & Group Provisioning

The root of all authentication and authorization in Hadoop is the set of OS-level users and groups that people and processes use when accessing the cluster. HDFS permissions are modeled on the Portable Operating System Interface (POSIX) family of standards, which presents a simple but powerful file system security model: every file system object is associated with three sets of permissions that define access for the owner, the owning group, and others. Each set may contain Read (r), Write (w), and Execute (x) permissions.
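To make the owner/group/other model concrete, here is a minimal Python sketch of how a single permission check resolves. It is an illustration, not HDFS code: the `FsObject` type and `permitted` function are hypothetical, and the sketch ignores the HDFS superuser (the identity that started the NameNode), for whom permission checks never fail. As in POSIX, exactly one class is selected (owner first, then group, then other) and only that class's bits are tested.

```python
from __future__ import annotations

from dataclasses import dataclass

# Bit values for r, w, x within one permission class.
READ, WRITE, EXECUTE = 4, 2, 1


@dataclass
class FsObject:
    """Hypothetical stand-in for an HDFS file or directory."""
    owner: str
    group: str
    mode: int  # octal, e.g. 0o750 = owner rwx, group r-x, other ---


def permitted(obj: FsObject, user: str, user_groups: set[str], wanted: int) -> bool:
    """Select one permission class (owner, then group, then other) and test it."""
    if user == obj.owner:
        bits = (obj.mode >> 6) & 0o7
    elif obj.group in user_groups:
        bits = (obj.mode >> 3) & 0o7
    else:
        bits = obj.mode & 0o7
    return (bits & wanted) == wanted


if __name__ == "__main__":
    report = FsObject(owner="etl", group="analysts", mode=0o750)
    print(permitted(report, "etl", {"etl"}, READ | WRITE))    # True: owner has rwx
    print(permitted(report, "maria", {"analysts"}, READ))     # True: group has r-x
    print(permitted(report, "maria", {"analysts"}, WRITE))    # False: group lacks w
    print(permitted(report, "guest", {"users"}, READ))        # False: other has ---
```

Note that the owner class wins even when it is more restrictive than the group class; that mirrors POSIX, and HDFS follows the same ordering.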

While the appropriate users and groups need to be on every host in the cluster, Hadoop is unaware of how those user/group objects were created. The primary options are as follows.

- Host-Based: This is the simplest to understand, but surely the toughest to maintain, as each user and group needs to be created individually on every machine. There will almost surely also be security concerns around password strength, password expiration, and auditing.

- Directory-Based: In this approach a centralized resource, such as Active Directory (AD) or LDAP, is used to create users and groups, and an integration with the hosts, either through a commercial product or an internally developed framework, ensures a seamless experience for users. This is often addressed with Pluggable Authentication Modules (PAM) integration.

- Hybrid Solution: As Hadoop is unaware of the “hows” of user/group provisioning, a custom solution could also be employed. An example could be PAM integration with AD user objects to handle authentication onto the hosts, but utilizing local groups for the authorization aspects of HDFS.
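In the hybrid example, a user is authenticated against AD, but the groups HDFS uses for authorization come from local definitions. Per the HDFS Permissions Guide, the user-to-groups mapping is performed on the NameNode, so it is the NameNode host's group configuration that ultimately counts. The Python below is a hypothetical helper (not part of Hadoop) that resolves a user's groups from `/etc/group`-formatted text, roughly what a Unix group lookup returns when groups are defined locally.

```python
from __future__ import annotations


def groups_for(user: str, primary_group: str, etc_group_text: str) -> set[str]:
    """Return the user's primary group plus every group that lists the user.

    Each /etc/group line has the form  name:password:GID:member1,member2,...
    (Hypothetical helper for illustration; Hadoop performs its own lookup.)
    """
    groups = {primary_group}
    for raw in etc_group_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        fields = line.split(":")
        if len(fields) < 4:
            continue  # skip malformed entries
        members = [m.strip() for m in fields[3].split(",") if m.strip()]
        if user in members:
            groups.add(fields[0])
    return groups


if __name__ == "__main__":
    etc_group = "\n".join([
        "analysts:x:5001:maria,raj",
        "etl:x:5002:",
        "hadoop:x:5003:hdfs,yarn,etl",
    ])
    print(sorted(groups_for("etl", "etl", etc_group)))      # ['etl', 'hadoop']
    print(sorted(groups_for("maria", "users", etc_group)))  # ['analysts', 'users']
```

The user names, group names, and GIDs above are made up; the point is that the authentication source (AD via PAM) and the authorization source (local groups) can differ without Hadoop knowing or caring.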

What are people doing in this space? In my experience, it seems most Hadoop systems are leveraging a hybrid approach, such as the example above, for their underlying Linux user and group strategy.

Where do I go for more info? http://hadoop.apache.org/docs/r2.4.1/hadoop-project-dist/hadoop-hdfs/HdfsPermissionsGuide.html

User Integrity

With the pervasive reliance on identities as they are presented to the OS, Kerberos allows for even stronger authentication. Users can more reliably identify themselves and then have that identity propagated throughout the Hadoop cluster. Kerberos also secures the accounts that run the various elements in the Hadoop ecosystem, thereby preventing malicious systems from “posing as” part of the cluster to gain access to the data.

Kerberos can be integrated with corporate directory servers such as Microsoft's Active Directory, which helps tons when there are a significant number of direct Hadoop users.
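Once Kerberos is in place, each request carries a principal such as `alice@EXAMPLE.COM` or `nn/host@EXAMPLE.COM` (primary/instance@REALM), and Hadoop maps it to a short name, which is what HDFS permission checks compare against file owners and groups. That mapping is configured through the `hadoop.security.auth_to_local` property. The Python below is a simplified sketch of only its `DEFAULT` rule (principals in the cluster's default realm map to their first component); real deployments, especially those trusting an AD realm, usually add `RULE:` entries that this sketch does not model. The realm and host names are hypothetical.

```python
from __future__ import annotations

from typing import Optional, Tuple


def parse_principal(principal: str) -> Tuple[str, Optional[str], str]:
    """Split 'primary/instance@REALM' into (primary, instance or None, realm)."""
    name, sep, realm = principal.rpartition("@")
    if not sep or not name or not realm:
        raise ValueError(f"not a fully qualified principal: {principal!r}")
    primary, _, instance = name.partition("/")
    return primary, (instance or None), realm


def default_short_name(principal: str, default_realm: str) -> str:
    """Simplified DEFAULT auth_to_local behavior: same realm -> first component."""
    primary, _instance, realm = parse_principal(principal)
    if realm != default_realm:
        # No rule applies; foreign realms (e.g. a trusted AD realm) need RULE: entries.
        raise ValueError(f"no rule applies to {principal!r}")
    return primary


if __name__ == "__main__":
    print(default_short_name("alice@EXAMPLE.COM", "EXAMPLE.COM"))                  # alice
    print(default_short_name("nn/master1.example.com@EXAMPLE.COM", "EXAMPLE.COM"))  # nn
```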

What are people doing in this space? In my experience, I'd estimate that about 50% of Hadoop clusters are utilizing Kerberos. For the rest, this seems to be because either the use case does not require this additional level of security and/or there is a belief that the integration and maintenance costs are too high.

Where do I go for more info? http://hortonworks.com/wp-content/uploads/2011/10/security-design_withCover-1.pdf

Additional Authentication

The right combination of secure users and groups, along with the appropriate physical access points described later, provides a solid authentication model for users taking advantage of Command-Line Interface (CLI) tools from the Hadoop ecosystem (e.g., Hive, Pig, MapReduce, Streaming). Additional access points will need to be secured as well. These access points could include the following.

- HDP Access Components

  - HiveServer2 (HS2): Provides JDBC/ODBC connectivity to Hive.

  - Hue: Provides a user-friendly web front end for the majority of the Hadoop ecosystem tools to complement the CLI approach.

- 3rd Party Components: Tools that sit “in front” of the Hadoop cluster may use a traditional model where they connect to the Hadoop cluster via a single “system” account (e.g., via JDBC) and then present their own AAA implementations.

Hue and HS2 each have multiple authentication configuration options, including various approaches to impersonation, that can augment, or even replace, whatever approach is being leveraged by the CLI tooling environment. Additionally, 3rd party tools such as Business Objects provide their own AAA functionality to manage existing users and utilize a system account to connect to Hive/HS2.

What are people doing in this space? Generally, it seems to me that teams are configuring authentication in these access points to align with the same authentication that the CLI tools are using. Some organizations with a more narrowly focused plan for utilizing their cluster are "locking down" access to the data from first-class Hadoop clients (FS Shell, Pig, Hive/HS2, MapReduce, etc.) to only the true exploratory data science and software development communities. For everyone else, they are taking an approach from the application server playbook – having 3rd party BI/reporting tools use a "system account" to interact with relational-oriented data via HS2 and leveraging the rich AAA abilities of these user-facing tools.
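The difference between these approaches comes down to which identity HDFS sees when a query runs. With impersonation enabled (the `hive.server2.enable.doAs` property in hive-site.xml), HiveServer2 runs the query as the authenticated user; without it, the query runs as the HiveServer2 service account. The Python model below is purely hypothetical (`Hs2Settings` and `effective_hdfs_user` are illustrative names), but it shows why the "system account" pattern moves per-user authorization out of Hadoop and into the BI tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hs2Settings:
    """Hypothetical model of the HS2 settings that decide the effective user."""
    service_user: str   # account the HiveServer2 process runs as, e.g. "hive"
    enable_do_as: bool  # mirrors the intent of hive.server2.enable.doAs


def effective_hdfs_user(authenticated_user: str, settings: Hs2Settings) -> str:
    """Identity that HDFS permission checks will see for this session's queries."""
    return authenticated_user if settings.enable_do_as else settings.service_user


if __name__ == "__main__":
    doas = Hs2Settings(service_user="hive", enable_do_as=True)
    no_doas = Hs2Settings(service_user="hive", enable_do_as=False)

    # Analyst connecting directly: HDFS checks run against her own identity.
    print(effective_hdfs_user("maria", doas))     # maria
    # Without impersonation, every session looks like the service account.
    print(effective_hdfs_user("maria", no_doas))  # hive
    # BI tool using a system account: all report users collapse into one identity,
    # so per-user rules must be enforced inside the BI tool itself.
    print(effective_hdfs_user("svc_bi", doas))    # svc_bi
```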

Where do I go for more info? https://cwiki.apache.org/confluence/display/Hive/Setting+Up+HiveServer2 and https://github.com/cloudera/hue/blob/master/desktop/conf.dist/hue.ini

Additional Authorization

The POSIX-based permission model described earlier will accommodate most security needs, but there is an additional option should the authorization rules become more complex than this model can handle: HDFS ACLs (Access Control Lists). This feature became available in HDP 2.1 and ships with the platform, so it can be taken advantage of whenever the authorization use case demands it, once it has been enabled by setting dfs.namenode.acls.enabled to true.
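With ACLs, the check gains named-user and named-group entries plus a mask that caps what those entries (and the owning group) can grant. The Python sketch below follows the evaluation order described in the HDFS Permissions Guide; the `AclStatus` type and `acl_permits` function are hypothetical, and the superuser bypass is again ignored. On a real cluster, ACL entries are managed with `hdfs dfs -setfacl` and inspected with `hdfs dfs -getfacl`.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Dict, Optional, Set

R, W, X = 4, 2, 1


@dataclass
class AclStatus:
    """Hypothetical view of a file's permission bits plus its extended ACL."""
    owner: str
    group: str
    owner_perm: int
    group_perm: int                     # owning-group entry
    other_perm: int
    named_users: Dict[str, int] = field(default_factory=dict)
    named_groups: Dict[str, int] = field(default_factory=dict)
    mask: Optional[int] = None          # caps named entries and the owning group


def acl_permits(acl: AclStatus, user: str, user_groups: Set[str], wanted: int) -> bool:
    def grants(perm: int) -> bool:
        return (perm & wanted) == wanted

    mask = 0o7 if acl.mask is None else acl.mask
    # 1. Owner entry (never filtered by the mask).
    if user == acl.owner:
        return grants(acl.owner_perm)
    # 2. Named user entry, filtered by the mask.
    if user in acl.named_users:
        return grants(acl.named_users[user] & mask)
    # 3. Owning group, then named groups: any matching entry that grants wins.
    matched_group = False
    candidates = [(acl.group, acl.group_perm)] + list(acl.named_groups.items())
    for group, perm in candidates:
        if group in user_groups:
            matched_group = True
            if grants(perm & mask):
                return True
    # 4. A group matched but none granted access: deny without consulting "other".
    if matched_group:
        return False
    # 5. Fall back to the "other" entry.
    return grants(acl.other_perm)


if __name__ == "__main__":
    claims = AclStatus(owner="etl", group="analysts",
                       owner_perm=R | W | X, group_perm=R | X, other_perm=0,
                       named_users={"auditor": R | X},
                       named_groups={"finance": R | W | X},
                       mask=R | X)
    print(acl_permits(claims, "auditor", {"audit"}, R))  # True
    print(acl_permits(claims, "raj", {"finance"}, R))    # True
    print(acl_permits(claims, "raj", {"finance"}, W))    # False: mask strips w
    print(acl_permits(claims, "guest", {"users"}, R))    # False: other is ---
```

The user and group names are made up. The example shows the main practical surprise of HDFS ACLs: a named entry can list `rwx`, yet the mask still limits what it actually grants.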

The long-time “data services” members of the HDP stack, HBase and Hive, also allow additional ACLs to be set on the “objects” within their frameworks. For Hive, this takes the form of granting and revoking access controls on tables. HBase provides authorization on tables and column families. Accumulo (another “data services” offering from HDP that Mercy is currently not planning on leveraging) extends the HBase model down to cell-level authorization.
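Accumulo's cell-level model works by labeling each cell with a visibility expression built from authorization tokens, `&` (and), `|` (or), and parentheses; a scan only returns cells whose expression is satisfied by the scanning user's authorizations, an empty label is readable by everyone, and mixing `&` and `|` at the same level without parentheses is rejected. The Python sketch below evaluates such expressions. It is a simplified, hypothetical illustration (it does not handle Accumulo's quoted terms) rather than Accumulo's own parser, and the labels are made up.

```python
import re
from typing import List, Set

_TOKEN = re.compile(r"[A-Za-z0-9_\-:./]+|[&|()]")


def _tokenize(expr: str) -> List[str]:
    tokens, pos = [], 0
    while pos < len(expr):
        match = _TOKEN.match(expr, pos)
        if match is None:
            raise ValueError(f"unexpected character {expr[pos]!r} at {pos}")
        tokens.append(match.group())
        pos = match.end()
    return tokens


def visible(expr: str, auths: Set[str]) -> bool:
    """True if a user holding `auths` may read a cell labeled with `expr`."""
    tokens = _tokenize(expr)
    if not tokens:
        return True  # an empty label is readable by everyone
    pos = 0

    def parse_group() -> bool:
        # A run of terms joined by a single kind of operator.
        nonlocal pos
        values, op = [parse_term()], None
        while pos < len(tokens) and tokens[pos] in ("&", "|"):
            if op is not None and tokens[pos] != op:
                raise ValueError("mixing & and | requires parentheses")
            op = tokens[pos]
            pos += 1
            values.append(parse_term())
        return all(values) if op == "&" else any(values)

    def parse_term() -> bool:
        nonlocal pos
        if pos >= len(tokens):
            raise ValueError("expression ends unexpectedly")
        token = tokens[pos]
        pos += 1
        if token == "(":
            value = parse_group()
            if pos >= len(tokens) or tokens[pos] != ")":
                raise ValueError("missing ')'")
            pos += 1
            return value
        if token in ("&", "|", ")"):
            raise ValueError(f"unexpected {token!r}")
        return token in auths

    result = parse_group()
    if pos != len(tokens):
        raise ValueError(f"unexpected {tokens[pos]!r}")
    return result


if __name__ == "__main__":
    print(visible("admin|(audit&finance)", {"audit", "finance"}))  # True
    print(visible("admin|(audit&finance)", {"audit"}))             # False
```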

The additional ACL abilities can become useful as Mercy’s applications and expertise mature, but the recommendation is to first address data access controls via the base POSIX permission model, as this will be the first line of defense for CLI access to the file system and for ecosystem tools like Pig. Attention needs to be paid to this level of authorization, especially given the approach of limiting access to the data rather than to the tools.

As a result of Hortonworks’ acquisition of XA Secure, a unified console for authorization across the ecosystem offerings has become available. Additional research can be done to determine whether this software, which Hortonworks is working to introduce as an incubating Apache project, is applicable to Mercy’s needs.

Activity Logging

HDFS has a built-in ability to log access requests to the filesystem. This provides a low-level snapshot of the events that occur and who performed them. The logs are human-readable, but not necessarily reader-friendly. They are detailed logs that can themselves be used as a basis for reporting and/or ad-hoc querying with Hadoop frameworks and/or other 3rd party tools.
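As an example of using the audit log as a basis for reporting, the Python sketch below parses entries and counts denied requests per user. It assumes the common layout in which each entry ends with tab-separated `key=value` fields (`allowed`, `ugi`, `ip`, `cmd`, `src`, `dst`, `perm`); the exact prefix and field set depend on the Hadoop version and log4j configuration, so treat the sample lines as illustrative rather than verbatim. The functions are hypothetical helpers.

```python
from __future__ import annotations

from collections import Counter
from typing import Dict, Iterable


def parse_audit_line(line: str) -> Dict[str, str]:
    """Turn the key=value tail of an HDFS audit entry into a dict."""
    _, sep, tail = line.partition("allowed=")
    if not sep:
        raise ValueError("not an HDFS audit entry")
    record: Dict[str, str] = {}
    for item in ("allowed=" + tail).rstrip("\n").split("\t"):
        key, _, value = item.partition("=")  # values such as paths keep any later '='
        record[key] = value
    return record


def denied_by_user(lines: Iterable[str]) -> Counter:
    """Count denied requests per user, e.g. as input to an access report."""
    counts: Counter = Counter()
    for line in lines:
        record = parse_audit_line(line)
        if record.get("allowed") == "false":
            # ugi looks like "maria (auth:KERBEROS)"; keep only the user name.
            counts[record.get("ugi", "").split(" ")[0]] += 1
    return counts


# Illustrative entries only; real prefixes vary with the log4j layout.
SAMPLE = [
    "2014-07-15 10:22:31,123 INFO FSNamesystem.audit: allowed=true\tugi=maria (auth:KERBEROS)"
    "\tip=/10.0.0.12\tcmd=open\tsrc=/data/claims/part-00000\tdst=null\tperm=null",
    "2014-07-15 10:23:02,456 INFO FSNamesystem.audit: allowed=false\tugi=guest (auth:KERBEROS)"
    "\tip=/10.0.0.40\tcmd=listStatus\tsrc=/data/claims\tdst=null\tperm=null",
]

if __name__ == "__main__":
    print(parse_audit_line(SAMPLE[0])["cmd"])  # open
    print(denied_by_user(SAMPLE))              # Counter({'guest': 1})
```

At cluster scale the same idea would more likely run as a Hive or Pig job over the collected logs, but the parsing logic is the same.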

Hive also has the ability to log metastore API invocations, and HBase offers its own audit log. To pull all of this together into a single pane of glass, Hortonworks offers XA Secure, a software layer that gathers this dispersed audit information into a cohesive user experience. The audit data can be further broken down into access audit, policy audit, and agent audit data, giving auditors granular visibility into users’ access as well as administrative actions within this security portal.

This feature set, coupled with the single pane of glass for authorization rights, seems to be a solid fit for Mercy, which, by the nature of the data being stored, will require this level of administrative control. Additional investigation is warranted to ensure XA Secure will meet the end goals of the auditing requirements that are present, and additional hardware will need to be obtained to run this web-tier service.

Mercy should ensure that appropriate levels of auditing information are being produced and retained to support any requirements or desired reporting opportunities.

Perimeter Security

Establishing perimeter security has been a foundational Hadoop security approach. In its simplest form, this means limiting direct access to the HDP cluster itself and providing an “edge” node (aka gateway) that users and systems access directly; all other actions are then launched from this gateway into the cluster.

The Knox Gateway is a recent addition to the HDP stack and provides a software layer intended to perform this perimeter security function. Knox does not yet support enough operations to completely replace the traditional edge node (e.g., HS2 access is supported only via JDBC; ODBC functionality is still lacking).
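The perimeter idea is easiest to see in URLs. Knox exposes cluster services under a single HTTPS endpoint of the form `https://{gateway-host}:{gateway-port}/{gateway-path}/{topology}/{service-path}`, so a client that used to call WebHDFS on the NameNode directly calls the gateway instead, and the NameNode address never needs to be reachable from outside. The Python helper below is a hypothetical sketch that performs that rewrite for WebHDFS URLs only; it assumes Knox's default port (8443) and path (`gateway`), and the host and topology names are made up.

```python
from urllib.parse import urlsplit, urlunsplit


def via_knox(internal_url: str, gateway_host: str, topology: str,
             gateway_port: int = 8443, gateway_path: str = "gateway") -> str:
    """Rewrite a direct WebHDFS URL into the equivalent Knox Gateway URL."""
    parts = urlsplit(internal_url)
    if not parts.path.startswith("/webhdfs/v1"):
        raise ValueError("this sketch only handles WebHDFS URLs")
    # The NameNode host and port are dropped; only the gateway is addressed.
    path = f"/{gateway_path}/{topology}{parts.path}"
    return urlunsplit(("https", f"{gateway_host}:{gateway_port}", path, parts.query, ""))


if __name__ == "__main__":
    direct = "http://namenode1.internal:50070/webhdfs/v1/data/claims?op=LISTSTATUS"
    print(via_knox(direct, "knox.example.com", "prod"))
    # https://knox.example.com:8443/gateway/prod/webhdfs/v1/data/claims?op=LISTSTATUS
```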

Mercy could benefit from additional investigations into Knox at this time, but would need to provision additional hosts and wall off the Knox Gateway web server farm with firewalls, similar to the network architecture shown in the diagram below.

[Figure: Knox Gateway network architecture]

The traditional edge node also often satisfies the ingestion-node requirements of the many architectures that need a landing zone for data before it is persisted into HDFS. Mercy’s current investment in traditional edge nodes is a meaningful one.

Data Encryption

Data encryption can be broken into two primary scenarios: encryption in-transit and encryption at-rest. Wire encryption options exist in Hadoop to address whatever in-transit needs might be present. HDP has many options available to protect data as it moves through Hadoop over RPC, HTTP, Data Transfer Protocol (DTP), and JDBC.
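As a quick way to see which of these channels a cluster actually protects, the Python sketch below checks a merged dictionary of configuration properties. The property names are the standard Hadoop 2.x/Hive settings for each channel (`hadoop.rpc.protection` in core-site.xml, `dfs.encrypt.data.transfer` and `dfs.http.policy` in hdfs-site.xml, `hive.server2.use.SSL` in hive-site.xml), but the helper itself is hypothetical, reads no files, and does not verify keystores, certificates, or Kerberos setup.

```python
from __future__ import annotations

from typing import Dict


def wire_encryption_report(conf: Dict[str, str]) -> Dict[str, bool]:
    """Report which in-transit channels a merged set of properties encrypts.

    Missing keys fall back to the stock defaults, which leave every channel unencrypted.
    """
    rpc_levels = {v.strip() for v in conf.get("hadoop.rpc.protection", "authentication").split(",")}
    return {
        # RPC: only the "privacy" quality of protection encrypts (SASL/Kerberos connections).
        "RPC": rpc_levels == {"privacy"},
        # Data Transfer Protocol between clients and DataNodes.
        "DTP": conf.get("dfs.encrypt.data.transfer", "false").lower() == "true",
        # HTTP: HTTP_AND_HTTPS still leaves a plaintext listener open, so count it as False.
        "HTTP": conf.get("dfs.http.policy", "HTTP_ONLY") == "HTTPS_ONLY",
        # JDBC/ODBC into HiveServer2.
        "JDBC": conf.get("hive.server2.use.SSL", "false").lower() == "true",
    }


if __name__ == "__main__":
    conf = {"hadoop.rpc.protection": "privacy", "dfs.encrypt.data.transfer": "true"}
    print(wire_encryption_report(conf))
    # {'RPC': True, 'DTP': True, 'HTTP': False, 'JDBC': False}
```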

For encryption at-rest, there are some open source activities underway, but HDP does not inherently have a baseline encryption solution for the data that is persisted within HDFS. There are several 3rd party solutions available (including Hortonworks partners) that specifically target this requirement. Custom development could also be undertaken, but the absolute easiest mechanism to obtain encryption at-rest is to tackle this at an OS or hardware level.

Mercy will need to determine what, if any, of the available encryption options provide the right trade-off of simplicity and security. This decision will surely be impacted by what other security components and practices are employed.
